Introduction

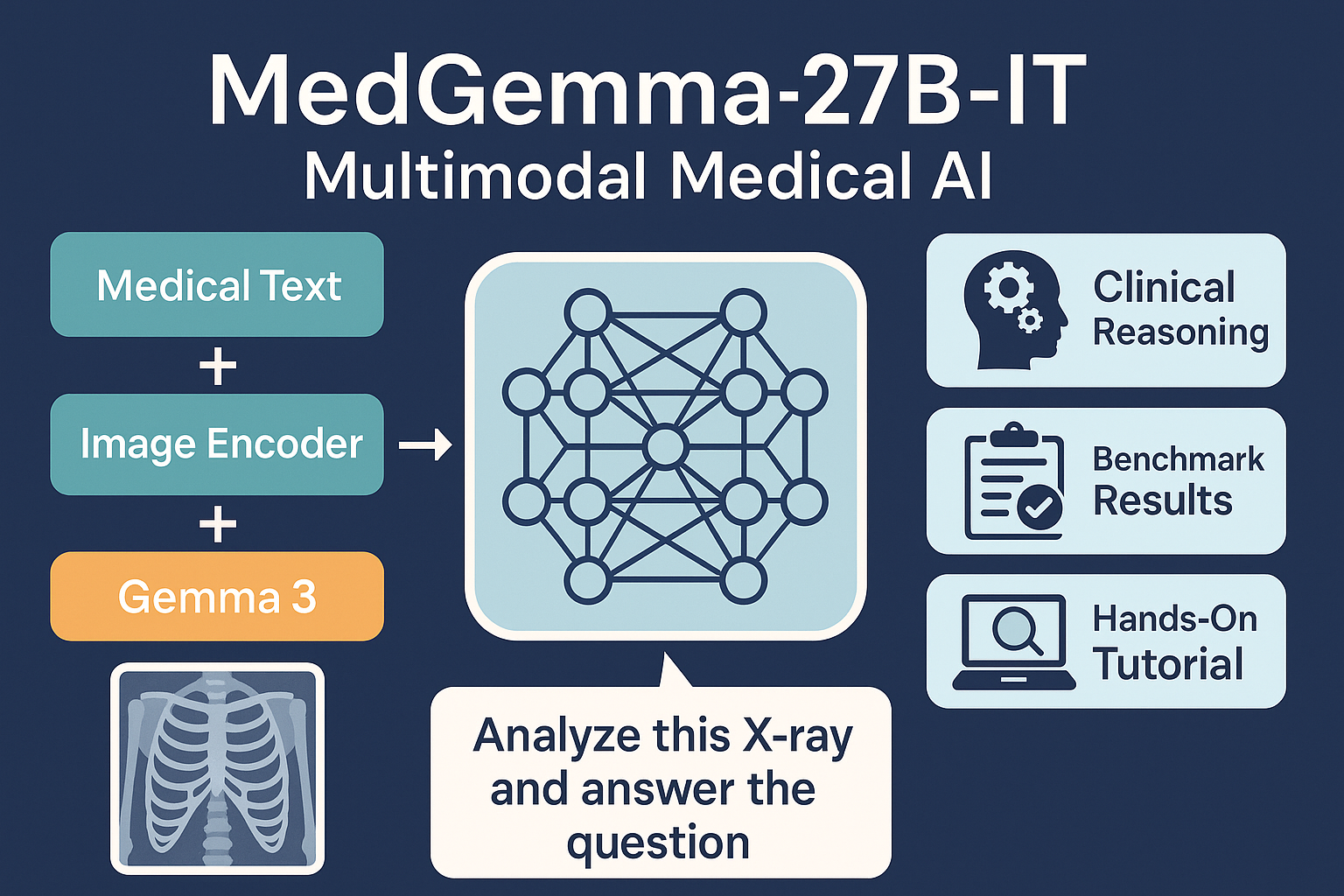

Artificial intelligence is beginning to change how medical data is read and analyzed. MedGemma-27B-IT is the largest model in Google’s MedGemma family of open medical AI models, built on the Gemma 3 architecture and trained for medical text and image comprehension. This guide explains what the 27-billion-parameter model offers, how its variants differ, and how healthcare professionals, researchers, and developers can start using it.

MedGemma-27B-IT is part of Google’s Health AI Developer Foundations initiative, which gives developers a starting point for building healthcare applications. Unlike general-purpose AI models, MedGemma has been trained on diverse medical datasets, which improves its handling of clinical context, medical terminology, and healthcare-specific reasoning.

MedGemma Available Variants

MedGemma is available in three model variants, each suited to different healthcare AI tasks depending on scale and modality:

MedGemma 4B Multimodal

A lightweight, multimodal model designed for entry-level or resource-constrained medical AI applications.

- Model Size: 4 billion parameters

- Modality: Text + Images

- Versions: Pre-trained (-pt) and instruction-tuned (-it)

- Use Case: Suitable for prototyping or applications needing medical image and text understanding on smaller infrastructure.

MedGemma 27B Text-Only

A large-scale, text-focused model optimized for deep medical reasoning and text comprehension.

- Model Size: 27 billion parameters

- Modality: Text-only

- Version: Instruction-tuned only

- Use Case: Ideal for tasks involving clinical documentation, question answering, summarization, and any healthcare-related NLP application that doesn’t require image inputs.

MedGemma 27B Multimodal

A powerful multimodal model combining vision and language capabilities with structured data understanding.

- Model Size: 27 billion parameters

- Modality: Text + Images + FHIR-based medical records

- Version: Instruction-tuned only

- Use Case: Best suited for comprehensive healthcare AI applications that involve radiology, dermatology, histopathology, electronic health record (EHR) data, and clinical report comprehension.

MedGemma Model Selection Guidance

| Use Case | Recommended Model |

|---|---|

| Need support for text, records, and medical images | MedGemma 27B Multimodal |

| Focused only on medical text tasks | MedGemma 27B Text-Only |

| Lightweight, multimodal experimentation or small-scale applications | MedGemma 4B Multimodal |
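The selection table can also be written as a small helper. The sketch below is illustrative only: the function name `recommend_variant` and its boolean parameters are hypothetical, the precedence given to limited hardware is an assumption, and the returned identifiers follow the `google/medgemma-{variant}` naming used in the tutorial later in this guide.

```python
def recommend_variant(needs_images: bool, needs_records: bool, limited_hardware: bool) -> str:
    """Map the selection table to a model ID (hypothetical helper, not an official API)."""
    if limited_hardware:
        # Lightweight, multimodal experimentation or small-scale applications.
        # Assumption: hardware limits take precedence over other needs.
        variant = "4b-it"
    elif needs_images or needs_records:
        # Text, records, and medical images.
        variant = "27b-it"
    else:
        # Medical text tasks only.
        variant = "27b-text-it"
    return f"google/medgemma-{variant}"


if __name__ == "__main__":
    print(recommend_variant(needs_images=False, needs_records=False, limited_hardware=False))
    print(recommend_variant(needs_images=True, needs_records=True, limited_hardware=False))
```

Keeping the rule in one function makes it easy to adjust if your own hardware or data requirements lead to a different trade-off.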

What is MedGemma-27B-IT?

MedGemma-27B-IT is an instruction-tuned, 27-billion-parameter large language model designed for medical text and image understanding. Unlike text-only LLMs, its multimodal variant processes both patient images (such as chest X-rays and pathology slides) and clinical narratives, so its outputs can draw on both.

Architecture Overview

- Base model: Gemma 3 (27B params)

- Image encoder: Fine-tuned SigLIP (for high-resolution medical images)

- Instruction tuning: Chat-style message format (system and user roles), focused on medical instruction-following

- Modality support:

  - text-to-text: Pure language use (e.g., clinical QA)

  - image-text-to-text: Multimodal (e.g., “Analyze this X-ray and answer the question”)

The instruction-tuned version (IT) has been specifically designed to follow complex medical instructions and provide clinically relevant responses. This model supports long context understanding of at least 128,000 tokens, enabling it to process extensive medical documents, patient records, and research papers.
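One way to reason about the 128,000-token context is to budget prompt tokens against the tokens reserved for the answer. The sketch below is only an illustration: the functions `fits_in_context` and `remaining_prompt_budget` are hypothetical, the code assumes the prompt and the generated tokens share the same window, and it expects a prompt token count that you have already measured with the model's tokenizer.

```python
CONTEXT_LIMIT = 128_000  # minimum context length stated in the text above


def fits_in_context(prompt_tokens: int, max_new_tokens: int,
                    context_limit: int = CONTEXT_LIMIT) -> bool:
    """Return True if prompt plus requested output fits in the window."""
    if prompt_tokens < 0 or max_new_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return prompt_tokens + max_new_tokens <= context_limit


def remaining_prompt_budget(max_new_tokens: int,
                            context_limit: int = CONTEXT_LIMIT) -> int:
    """Tokens left for the prompt after reserving space for the answer."""
    return max(context_limit - max_new_tokens, 0)


if __name__ == "__main__":
    # A long patient record with a short requested summary.
    print(fits_in_context(prompt_tokens=120_000, max_new_tokens=300))
    print(remaining_prompt_budget(max_new_tokens=300))
```

A check like this is most useful before sending long patient records or papers, where trimming the input is cheaper than a failed or truncated generation.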

Key Features and Specifications of MedGemma-27B-IT

Technical Specifications:

- Model Size: 27 billion parameters

- Architecture: Decoder-only transformer with grouped-query attention (GQA)

- Context Length: 128,000+ tokens

- Input Modalities: Text and medical images (multimodal version)

- Output Modality: Text generation up to 8,192 tokens

- Training Data: Diverse medical datasets including MIMIC-CXR, FHIR records, and proprietary medical data
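The parameter count translates directly into memory needed for the weights. As a rough sketch, the arithmetic below multiplies parameters by bytes per parameter for the bfloat16 and 4-bit settings used in the tutorial later in this guide. It covers weights only and ignores activations, the KV cache, and quantization overhead, so treat the results as lower bounds. The helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    if num_params <= 0 or bits_per_param <= 0:
        raise ValueError("num_params and bits_per_param must be positive")
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    for name, params in [("4B", 4e9), ("27B", 27e9)]:
        bf16 = weight_memory_gb(params, 16)  # bfloat16: 2 bytes per parameter
        int4 = weight_memory_gb(params, 4)   # 4-bit: half a byte per parameter
        print(f"{name}: ~{bf16:.1f} GB in bfloat16, ~{int4:.1f} GB in 4-bit")
```

By this estimate the 27B weights need about 54 GB in bfloat16 and about 13.5 GB in 4-bit, which is why quantization matters on a single GPU.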

Multimodal Image Encoder: SigLIP

The 27B multimodal variant integrates a SigLIP image encoder fine-tuned on de-identified medical images, including:

- Chest X-rays

- Dermatology visuals

- Ophthalmology scans

- Histopathology slides

This enables vision-language understanding tailored to clinical workflows.

Benchmarks and Performance

MedGemma-27B-IT (text-only variant) outperforms most open models on clinical QA benchmarks:

| Benchmark | Score (%) | Improvement Over Baseline |

|---|---|---|

| MedQA | 89.8 | +2.1 over Gemma‑3 |

| MedMCQA | 74.2 | +11.6 over base model |

| PubMedQA | 76.8 | +10.3 over base model |

For image + text tasks, human evaluations show high reliability in radiological descriptions and dermatological observations.
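Scores on multiple-choice benchmarks such as MedQA and MedMCQA are accuracies: the percentage of questions where the predicted option matches the reference answer. The sketch below shows that calculation on made-up data. The function name `accuracy_percent` and the sample answers are hypothetical and are not taken from any benchmark.

```python
from typing import Sequence


def accuracy_percent(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Percentage of predictions that match the reference option letter."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("at least one example is required")
    correct = sum(
        pred.strip().upper() == ref.strip().upper()
        for pred, ref in zip(predictions, references)
    )
    return 100.0 * correct / len(references)


if __name__ == "__main__":
    # Toy example: option letters for four questions (illustrative only).
    preds = ["A", "c", "B", "D"]
    golds = ["A", "C", "D", "D"]
    print(f"{accuracy_percent(preds, golds):.1f}%")
```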

Hands-On Tutorial – Using MedGemma-27B-IT

This tutorial walks you through loading Google’s MedGemma-27B-IT, running text + image inference, and generating radiology-style responses locally or in Colab.

1: Install Required Libraries

!pip install --upgrade --quiet accelerate bitsandbytes transformers

2: Setup & Model Configuration

Select your model variant and configuration:

from transformers import BitsAndBytesConfig
import torch

# Choose from: "4b-it", "27b-it", "27b-text-it"
model_variant = "4b-it"
model_id = f"google/medgemma-{model_variant}"

use_quantization = True  # Enable 4-bit quantization to save memory
is_thinking = False  # Enable thinking mode (only for 27B variants)

model_kwargs = dict(
    torch_dtype=torch.bfloat16,
    device_map="auto",
)

if use_quantization:
    model_kwargs["quantization_config"] = BitsAndBytesConfig(load_in_4bit=True)

Thinking mode adds silent reasoning steps and is supported only in the 27b variants.
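The thinking-mode handling that appears in later steps (the system-instruction prefix and the `<unused94>` and `<unused95>` markers around the reasoning text) can be collected into two helpers. This is a minimal sketch: the function names are hypothetical, the prefix and marker strings are copied from the tutorial code, and the fallback for a response without markers is an added assumption.

```python
from __future__ import annotations

THINK_PREFIX = "SYSTEM INSTRUCTION: think silently if needed."
THOUGHT_START = "<unused94>thought\n"  # marker that opens the reasoning text
THOUGHT_END = "<unused95>"             # marker that closes the reasoning text


def build_system_instruction(role_instruction: str, model_variant: str,
                             is_thinking: bool) -> str:
    """Add the thinking prefix only for 27b variants with thinking enabled."""
    if "27b" in model_variant and is_thinking:
        return f"{THINK_PREFIX} {role_instruction}"
    return role_instruction


def split_thinking(response: str) -> tuple[str, str]:
    """Return (thought, answer); thought is empty if no end marker is found."""
    thought, marker, answer = response.partition(THOUGHT_END)
    if not marker:
        # Assumption: without the end marker, treat everything as the answer.
        return "", response
    return thought.replace(THOUGHT_START, ""), answer


if __name__ == "__main__":
    raw = "<unused94>thought\nCheck the lung fields.<unused95>No acute findings."
    thought, answer = split_thinking(raw)
    print("thought:", thought)
    print("answer:", answer)
```

Using `str.partition` instead of `str.split` avoids an unpacking error when the model answers without emitting a reasoning section.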

3: Load Model & Pipeline

from transformers import pipeline

if "text" in model_variant:
    pipe = pipeline("text-generation", model=model_id, model_kwargs=model_kwargs)
else:
    pipe = pipeline("image-text-to-text", model=model_id, model_kwargs=model_kwargs)

pipe.model.generation_config.do_sample = False

4: Load Model Components

# Only needed for the direct generate() path shown later. Loading these in
# addition to the pipeline keeps a second copy of the model in memory.
if "text" in model_variant:
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained(model_id, **model_kwargs)
    tokenizer = AutoTokenizer.from_pretrained(model_id)
else:
    from transformers import AutoModelForImageTextToText, AutoProcessor

    model = AutoModelForImageTextToText.from_pretrained(model_id, **model_kwargs)
    processor = AutoProcessor.from_pretrained(model_id)

5: Load a Sample Medical Image

import os
from PIL import Image
from IPython.display import Image as IPImage, display, Markdown

# Prompt and image URL
prompt = "Describe this X-ray"
image_url = "https://upload.wikimedia.org/wikipedia/commons/c/c8/Chest_Xray_PA_3-8-2010.png"

# Download and open the image
!wget -nc -q {image_url}
image_filename = os.path.basename(image_url)
image = Image.open(image_filename)

6: Prepare Chat Input

role_instruction = "You are an expert radiologist."
system_instruction = (
    f"SYSTEM INSTRUCTION: think silently if needed. {role_instruction}"
    if "27b" in model_variant and is_thinking
    else role_instruction
)
max_new_tokens = 1300 if is_thinking else 300

messages = [
    {"role": "system", "content": [{"type": "text", "text": system_instruction}]},
    {"role": "user", "content": [{"type": "text", "text": prompt}, {"type": "image", "image": image}]},
]

7: Run Inference

output = pipe(text=messages, max_new_tokens=max_new_tokens)
response = output[0]["generated_text"][-1]["content"]

Display result:

display(Markdown(f"---\n\n**[ User ]**\n\n{prompt}"))
display(IPImage(filename=image_filename, height=300))

if "27b" in model_variant and is_thinking:
    thought, response = response.split("<unused95>")
    thought = thought.replace("<unused94>thought\n", "")
    display(Markdown(f"---\n\n**[ MedGemma thinking ]**\n\n{thought}"))

display(Markdown(f"---\n\n**[ MedGemma ]**\n\n{response}\n\n---"))

Alternate Inference: Use generate() Directly

inputs = processor.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device, dtype=torch.bfloat16)

input_len = inputs["input_ids"].shape[-1]

with torch.inference_mode():
    generation = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    generation = generation[0][input_len:]

response = processor.decode(generation, skip_special_tokens=True)

Output

display(Markdown(f"---\n\n**[ User ]**\n\n{prompt}"))
display(IPImage(filename=image_filename, height=300))

# Same guard as in the pipeline path: only 27b variants emit thinking markers
if "27b" in model_variant and is_thinking:
    thought, response = response.split("<unused95>")
    thought = thought.replace("<unused94>thought\n", "")
    display(Markdown(f"---\n\n**[ MedGemma thinking ]**\n\n{thought}"))

display(Markdown(f"---\n\n**[ MedGemma ]**\n\n{response}\n\n---"))

Important Links

More Details: Hugging Face

Models Collection: Check Here

Quick Start and fine-tune notebooks: Click Here

Conclusion

MedGemma-27B-IT is a significant step for open medical AI, giving healthcare professionals and researchers a capable model for medical text and image analysis. With 27 billion parameters and training aimed at deep medical text comprehension and clinical reasoning, it provides a strong foundation for developing healthcare applications.

The model’s success lies not just in its technical capabilities but also in its thoughtful design for healthcare applications. From electronic health record processing to clinical decision support, MedGemma-27B-IT offers a comprehensive solution for medical AI development. However, its true value emerges when it is properly validated, adapted, and integrated into healthcare workflows with appropriate human oversight.


Md Monsur Ali is a tech writer and researcher specializing in AI, LLMs, and automation. He shares tutorials, reviews, and real-world insights on cutting-edge technology to help developers and tech enthusiasts stay ahead.