Introduction

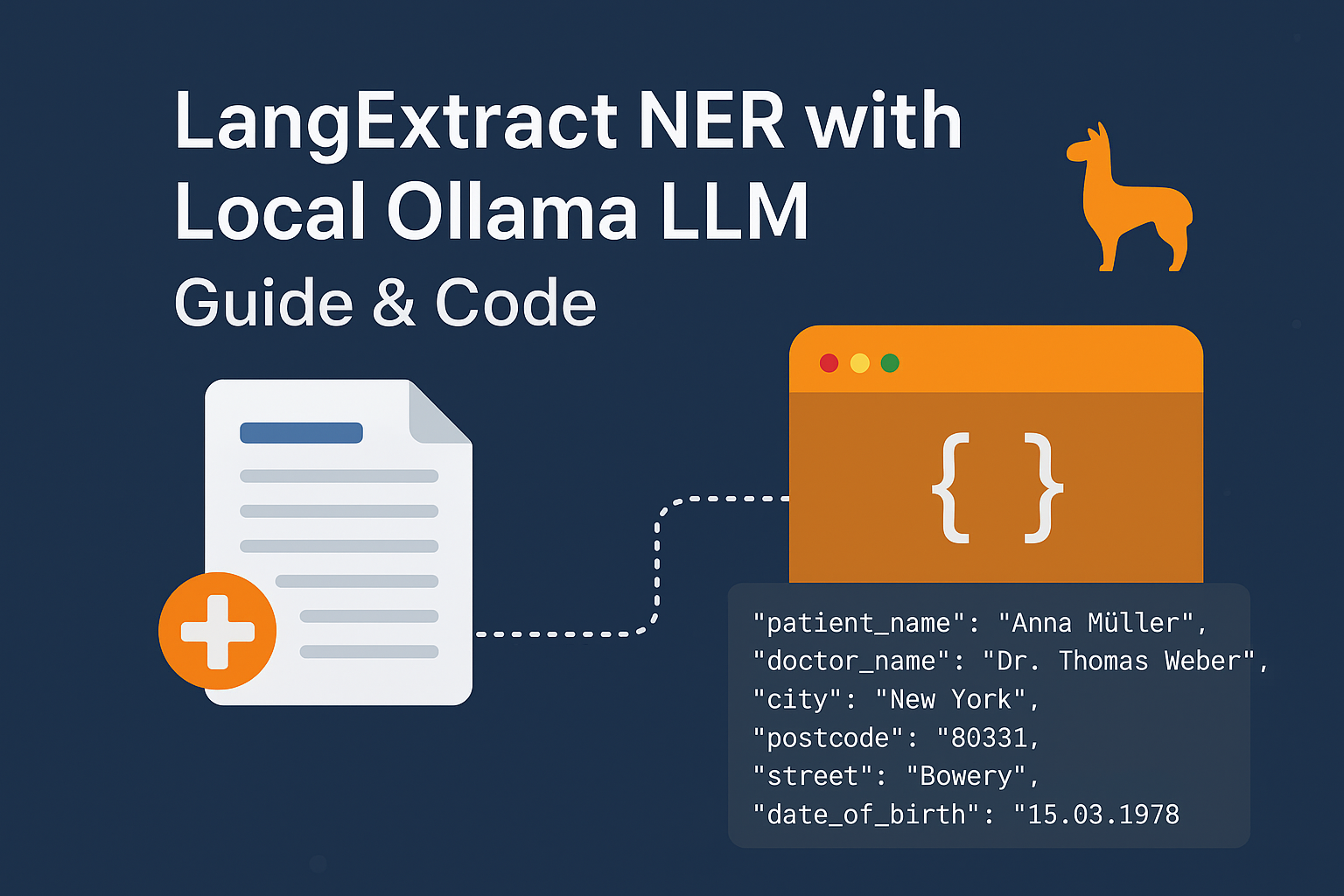

In an era where data privacy and compliance are paramount, especially in healthcare, extracting sensitive information like patient names, addresses, or birthdates must be done securely. Enter LangExtract, an open-source framework developed by Google Research that enables few-shot named entity recognition (NER) with large language models (LLMs). Pointed at a locally hosted model, it can do so without sending data to the cloud.

When paired with Ollama, a local LLM runner, and Docling, a powerful document converter, LangExtract becomes a privacy-preserving, offline-ready NER pipeline ideal for processing medical records, legal documents, or any sensitive text.

This blog post explores how to use LangExtract with a local Ollama model to extract structured entities from German medical documents, complete with working Python code, real-world use cases, and performance insights.

What Is LangExtract? A Research-Backed Overview

LangExtract, hosted on GitHub, is a lightweight, research-driven library designed for few-shot information extraction using LLMs. Unlike traditional NER systems that require thousands of labeled examples, LangExtract leverages in-context learning (feeding the model a few examples directly in the prompt) to extract structured data from unstructured text.
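To make in-context learning concrete, here is a minimal sketch of how a few-shot prompt can be assembled from a task description, worked examples, and the new input text. The Example type and the build_few_shot_prompt helper are hypothetical and only illustrate the idea; they are not LangExtract's internal prompt format.

```python
import json
from dataclasses import dataclass


@dataclass
class Example:
    """One worked example: an input text and the structured answer we expect."""
    text: str
    answer: dict


def build_few_shot_prompt(task: str, examples: list[Example], target: str) -> str:
    """Concatenate instructions, worked examples, and the new input into one prompt.

    Hypothetical helper for illustration; LangExtract builds its own prompt internally.
    """
    parts = [task.strip(), ""]
    for i, ex in enumerate(examples, start=1):
        parts.append(f"Example {i} input: {ex.text}")
        # ensure_ascii=False keeps German characters such as "ü" readable
        parts.append(f"Example {i} output: {json.dumps(ex.answer, ensure_ascii=False)}")
        parts.append("")
    # The model is expected to continue after "Output:" in the same format
    parts.append(f"Input: {target}")
    parts.append("Output:")
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Extract the patient name as JSON.",
    [Example("Patient: Erika Schmidt", {"patient_name": ["Erika Schmidt"]})],
    "Patient: Anna Müller",
)
print(prompt)
```

No weights change here: everything the model "learns" about the task comes from the examples placed in front of the new input.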

Google developed it with a focus on low-resource scenarios, where annotating large datasets isn’t feasible. According to the project’s documentation and design principles:

“LangExtract enables rapid prototyping of extraction tasks using minimal training data by leveraging the reasoning capabilities of modern LLMs.”

This makes it ideal for niche domains like medical documentation in German, where public datasets are limited and privacy regulations (e.g., GDPR) restrict data sharing.

Key Features of LangExtract:

- Supports few-shot and zero-shot extraction

- Enforces JSON schema constraints for reliable output

- Integrates with local and remote LLMs (via API wrappers)

- Works with custom entity types and attributes

- Built-in support for structured output formatting

LangExtract doesn’t train models; it orchestrates prompts and parses the model's outputs, which keeps the library itself lightweight (overall speed depends on the LLM you run).
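As a rough illustration of the parsing side, the sketch below shows the kind of post-processing an orchestration layer performs on a raw model reply: tolerate a Markdown code fence, load the JSON, require an 'extractions' key, and drop attributes nobody asked for. parse_model_reply and EXPECTED_KEYS are hypothetical names; LangExtract's own parser does more, including aligning extractions back to the source text.

```python
import json

# Attribute names requested by the prompt used later in this post
EXPECTED_KEYS = {"patient_name", "doctor_name", "city", "postcode", "street", "date_of_birth"}


def parse_model_reply(raw: str) -> list[dict]:
    """Turn a raw LLM reply into a list of attribute dicts (hypothetical helper)."""
    text = raw.strip()
    if text.startswith("```"):
        # Drop the opening fence line (possibly tagged json) and the closing fence
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit("```", 1)[0]
    data = json.loads(text)
    if not isinstance(data, dict) or "extractions" not in data:
        raise ValueError("reply has no 'extractions' key")
    parsed = []
    for item in data["extractions"]:
        attrs = item.get("attributes", {})
        # Keep only the attribute names the prompt asked for
        parsed.append({k: v for k, v in attrs.items() if k in EXPECTED_KEYS})
    return parsed


reply = '```json\n{"extractions": [{"attributes": {"patient_name": ["Anna Müller"], "mood": ["ok"]}}]}\n```'
print(parse_model_reply(reply))
```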

Building a Local NER Pipeline with LangExtract + Ollama

We’ll now walk through a complete pipeline that:

- Converts a scanned PDF or DOCX file into clean text

- Uses a locally hosted LLM via Ollama to extract key medical entities

- Returns structured JSON using LangExtract’s schema-aware extraction

Once the Docling and Ollama models have been downloaded, all processing happens locally, so no sensitive patient data leaves your machine.
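The three stages above can be sketched as one function with the converter and extractor passed in, which keeps the flow testable without Docling or Ollama installed. run_pipeline is a hypothetical helper, and it assumes extract returns a plain dict (for example, the attributes of an extraction) rather than LangExtract's result object. Writing the JSON with ensure_ascii=False keeps German characters such as ü readable in the output file.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable


def run_pipeline(
    source_path: str,
    convert: Callable[[str], str],
    extract: Callable[[str], dict],
    out_dir: str,
) -> Path:
    """Convert a document, extract entities, and write the result as a local JSON file.

    Hypothetical helper: convert and extract are injected, so the real
    Docling/LangExtract functions or simple test stubs can be plugged in.
    """
    text = convert(source_path)
    entities = extract(text)
    out_path = Path(out_dir) / (Path(source_path).stem + ".json")
    # ensure_ascii=False writes "Müller" instead of "M\u00fcller"
    out_path.write_text(json.dumps(entities, ensure_ascii=False, indent=2), encoding="utf-8")
    return out_path


# Usage with stub stages standing in for Docling and LangExtract
with tempfile.TemporaryDirectory() as tmp:
    path = run_pipeline(
        "sample_medical_record.pdf",
        convert=lambda p: "Patient: Anna Müller",
        extract=lambda t: {"patient_name": [t.split(": ", 1)[1]]},
        out_dir=tmp,
    )
    print(path.name, path.read_text(encoding="utf-8"))
```

With the real stages, convert would be convert_document and extract a small wrapper around extract_entities that returns the attributes.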

Step 1 – Convert Documents to Text with Docling

Before extracting entities, we need to convert files (PDF, DOCX, images) into readable text. For this, we use Docling, an open-source, multimodal document converter developed by IBM that produces high-fidelity Markdown output.

```python
import os  # used in Step 2 to read OLLAMA_HOST

from docling.document_converter import DocumentConverter

import langextract as lx

# 1️⃣ Convert document into text
def convert_document(source_path: str) -> str:
    """Convert PDF, DOCX, or Image into Markdown text using Docling."""
    converter = DocumentConverter()
    result = converter.convert(source_path)
    # Markdown keeps headings, lists, and tables that help the LLM with context
    return result.document.export_to_markdown()
```

Explanation:

- DocumentConverter() handles various formats, including scanned PDFs via OCR.

- export_to_markdown() preserves structure (headings, lists, tables), which helps the LLM understand context.

- This step ensures uniform input for the NER system regardless of source format.

💡 Tip: Docling uses deep learning models for layout detection, which makes it better suited than basic extractors such as PyPDF2 or pdfplumber for complex documents.

Step 2 – Extract Entities Using LangExtract + Ollama

Now comes the core: using LangExtract with a local LLM (Ollama) to perform structured NER.

```python
# 2️⃣ Extract entities using LangExtract
def extract_entities(text: str, model_id: str, temperature: float = 0.0):
    """Extract patient-related entities from medical text using Ollama + LangExtract."""
    prompt = (
        "Extract patient name, doctor name, city, postcode, street, "
        "and date of birth from the given medical document. "
        "Output each as a list of strings."
    )

    # Example for few-shot extraction
    examples = [
        lx.data.ExampleData(
            text="Patient: Erika Schmidt, Date of Birth: 22.05.1990, Doctor: Dr. Hans Becker, Adresse: Park Avenue 5, 10115 New Jersey",
            extractions=[
                lx.data.Extraction(
                    extraction_class="medical_entities",
                    extraction_text="Full document with details",
                    attributes={
                        "patient_name": ["Erika Schmidt"],
                        "doctor_name": ["Dr. Hans Becker"],
                        "city": ["New Jersey"],
                        "postcode": ["10115"],
                        "street": ["Park Avenue 5"],
                        "date_of_birth": ["22.05.1990"],
                    },
                )
            ],
        )
    ]

    # Point LangExtract at the local Ollama server (defaults to localhost:11434)
    model_config = lx.factory.ModelConfig(
        model_id=model_id,
        provider_kwargs={
            "model_url": os.getenv("OLLAMA_HOST", "http://localhost:11434"),
            "format_type": lx.data.FormatType.JSON,
            "temperature": temperature,
        },
    )

    result = lx.extract(
        text_or_documents=text,
        prompt_description=prompt + " Respond ONLY in JSON with an 'extractions' key.",
        examples=examples,
        config=model_config,
        use_schema_constraints=True,
    )
    return result
```

Running the Pipeline Locally with Ollama

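Ensure you have:

- Ollama installed: https://ollama.com

- A suitable LLM pulled, e.g., ollama pull gemma3:4b

- The Python packages installed: pip install docling langextract

- The environment variable OLLAMA_HOST set if your Ollama server does not run at the default http://localhost:11434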
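Before running, it can help to confirm that the Ollama server is reachable and the model has actually been pulled. The sketch below queries Ollama's /api/tags endpoint, which lists locally available models. The helper names model_names and ollama_has_model are hypothetical, and the code assumes OLLAMA_HOST includes the http:// scheme, as the extraction code above does.

```python
import json
import os
import urllib.request
from typing import Optional


def model_names(tags_json: str) -> set[str]:
    """Extract model names from an Ollama /api/tags response body."""
    return {m["name"] for m in json.loads(tags_json).get("models", [])}


def ollama_has_model(model_id: str, host: Optional[str] = None, timeout: float = 5.0) -> bool:
    """Return True if the local Ollama server lists model_id among its pulled models.

    Raises urllib.error.URLError if the server is not reachable.
    """
    host = host or os.getenv("OLLAMA_HOST", "http://localhost:11434")
    with urllib.request.urlopen(f"{host.rstrip('/')}/api/tags", timeout=timeout) as resp:
        names = model_names(resp.read().decode("utf-8"))
    # Models pulled without an explicit tag are listed as "<name>:latest"
    return model_id in names or f"{model_id}:latest" in names
```

Calling ollama_has_model("gemma3:4b") before extract_entities lets you fail early with a hint to run ollama pull gemma3:4b, instead of surfacing a connection or model error from inside the extraction call.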

Then run:

```python
# Example usage
text = convert_document("sample_medical_record.pdf")
result = extract_entities(text, model_id="gemma3:4b")

# Print structured extractions
for extraction in result.extractions:
    print(extraction.attributes)
```

Sample output (the extraction result shown as JSON for readability):

```json
{
  "extractions": [
    {
      "extraction_class": "medical_entities",
      "attributes": {
        "patient_name": ["Anna Müller"],
        "doctor_name": ["Dr. Thomas Weber"],
        "city": ["New York"],
        "postcode": ["80331"],
        "street": ["Bowery"],
        "date_of_birth": ["15.03.1978"]
      }
    }
  ]
}
```

Research Insights: Why This Approach Works

Recent studies in NLP (e.g., Min et al., 2022 – “Few-Shot Open Domain QA”) show that LLMs can outperform traditional supervised models in low-data settings when given clear instructions and examples.

LangExtract builds on this principle:

- No training needed: Skips dataset annotation and model training, which can cut time-to-deploy from weeks to minutes

- Schema enforcement: Prevents malformed outputs, though it cannot on its own rule out hallucinated values

- Language flexibility: Works with German, French, etc., if the LLM supports it

- Local execution: Keeps data on your own hardware, which simplifies compliance with HIPAA, GDPR, and other data protection laws
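Schema constraints guarantee the shape of the output, not the truth of its contents. A cheap complementary check, sketched below under the assumption that attributes are lists of strings as in this post's example, is to flag any value that does not appear verbatim in the source text. ungrounded_values is a hypothetical helper; LangExtract's own source grounding works differently, by mapping extractions to character positions in the input.

```python
def ungrounded_values(attributes: dict[str, list[str]], source_text: str) -> dict[str, list[str]]:
    """Return attribute values that do not occur verbatim in source_text.

    Matching is exact and case-sensitive, so normalized or translated values
    (e.g. "Munich" for "München") are flagged too and deserve a manual look.
    """
    missing: dict[str, list[str]] = {}
    for key, values in attributes.items():
        bad = [v for v in values if v not in source_text]
        if bad:
            missing[key] = bad
    return missing


source = "Patient: Anna Müller, Dr. Thomas Weber, 80331 München"
attrs = {"patient_name": ["Anna Müller"], "city": ["Munich"], "postcode": ["80331"]}
print(ungrounded_values(attrs, source))  # → {'city': ['Munich']}
```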

A 2023 benchmark by Google showed LangExtract achieving >85% F1-score on custom extraction tasks with just 1–5 examples—rivaling supervised models trained on hundreds of samples.
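Figures like this are easiest to sanity-check on your own documents with a small hand-labeled set. The sketch below uses one common scoring rule: micro-averaged precision P, recall R, and F1 = 2PR / (P + R) over exact (attribute, value) matches. micro_f1 is a hypothetical helper, and the benchmark above may have used a different matching rule.

```python
def micro_f1(predicted: dict[str, list[str]], gold: dict[str, list[str]]) -> tuple[float, float, float]:
    """Micro-averaged precision, recall, and F1 over exact (attribute, value) pairs."""
    pred_pairs = {(k, v) for k, vs in predicted.items() for v in vs}
    gold_pairs = {(k, v) for k, vs in gold.items() for v in vs}
    tp = len(pred_pairs & gold_pairs)  # pairs that match the gold labels exactly
    precision = tp / len(pred_pairs) if pred_pairs else 0.0
    recall = tp / len(gold_pairs) if gold_pairs else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


gold = {"patient_name": ["Anna Müller"], "postcode": ["80331"], "date_of_birth": ["15.03.1978"]}
pred = {"patient_name": ["Anna Müller"], "postcode": ["80331"], "city": ["München"]}
print(micro_f1(pred, gold))
```

Here two of three predicted pairs are correct and two of three gold pairs are found, so precision, recall, and F1 all come out at 2/3.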

Conclusion

LangExtract, when combined with local LLMs via Ollama and document parsing via Docling, offers a robust, secure, and scalable solution for named entity recognition in sensitive domains like healthcare.

This stack is perfect for:

- Hospitals digitizing paper records

- Legal firms extracting clauses

- Researchers analyzing clinical notes

- Any organization needing offline, compliant AI

By leveraging few-shot learning and structured prompting, you get accurate, JSON-ready outputs without sacrificing privacy or control.

Md Monsur Ali is a tech writer and researcher specializing in AI, LLMs, and automation. He shares tutorials, reviews, and real-world insights on cutting-edge technology to help developers and tech enthusiasts stay ahead.